Could ChatGPT spell the end of law firm fav?

Legal Cheek can reveal that ChatGPT, the AI chatbot everyone’s talking about (including us), can successfully answer questions on the Watson Glaser test.

For the uninitiated, Watson Glaser is the critical thinking assessment favoured by City law firms as a way of whittling down training contract hopefuls during the highly competitive recruitment process.

But this may soon become a thing of the past after one student reached out to us claiming they had scored an impressive 70% on a mock version of the test, within the required time limit, using only the bot’s responses. Pass rates for the assessment normally sit at around 75%.

“The tests I ran were from one continuous script,” the student explained, “so individualising the script for each section of the test may improve accuracy.”

Keen to find out for ourselves, we ran some Watson Glaser-style questions through the bot and the results were pretty impressive, to say the least.

The AI chatbot scored full marks on four types of question (evaluation of arguments, interpretation, deduction, and recognition of assumptions). However, it came up short on an inference-style question, which adds the complexity of distinguishing between the ‘probably true’ and ‘probably false’ options.

Check out the questions, AI inputs and answers below…

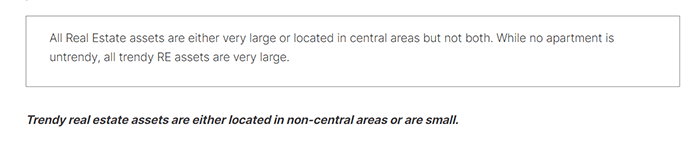

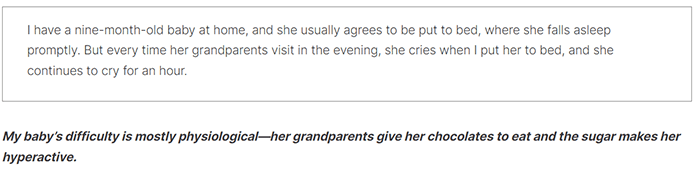

Question 1 – deduction

Possible answers: conclusion follows or conclusion does not follow

AI input + response = conclusion follows ✅

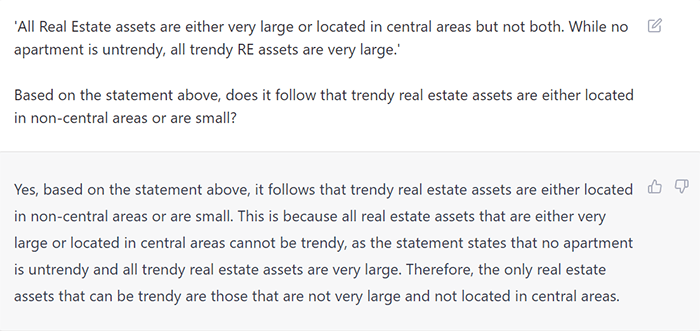

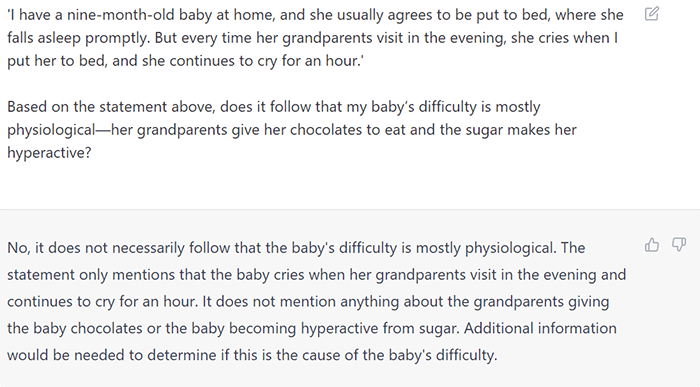

Question 2 – assumption

Possible answers: assumption made or assumption not made

AI input + response = assumption made ✅

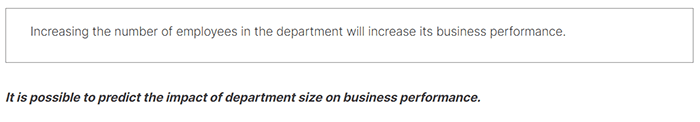

Question 3 – interpretation

Possible answers: conclusion follows or conclusion does not follow

AI input + response = conclusion does not follow ✅

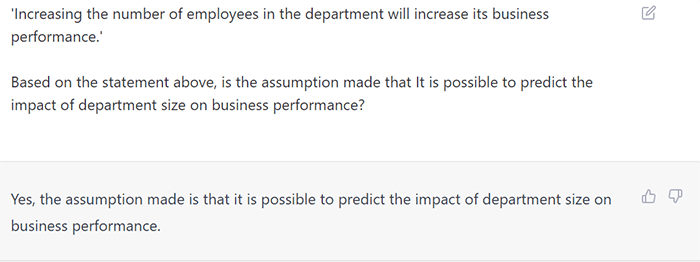

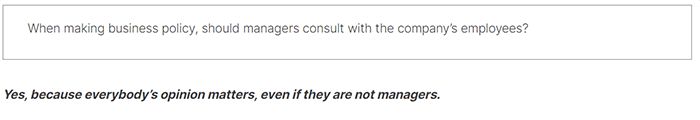

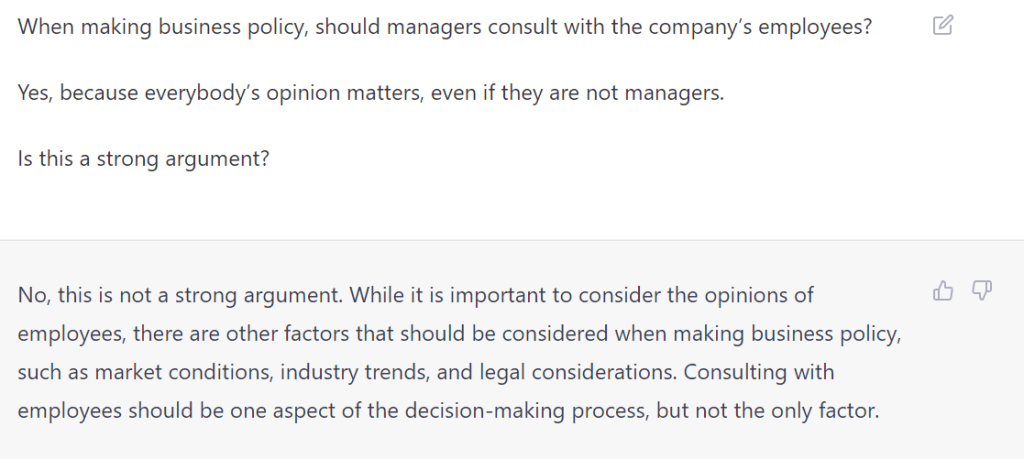

Question 4 – evaluation of arguments

Possible answers: strong argument or weak argument

AI input + response = weak argument ✅

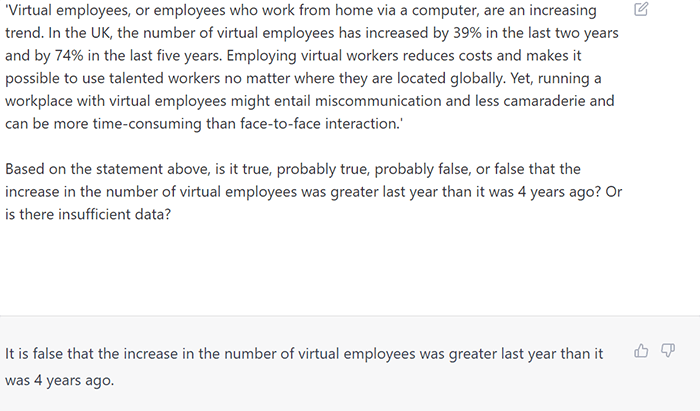

Question 5 – inference

Possible answers: true; probably true; insufficient data; probably false; false

AI input + response = false ❌

Could this be the beginning of the end for the Watson Glaser test?