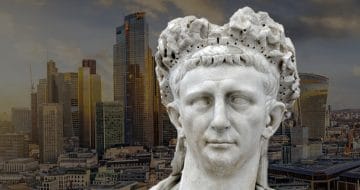

Have we replaced the authority of God with algorithmic-decision-making?

In the latest instalment of a mini-series that provides a historical perspective to the legal quandaries of the present, future magic circle trainee Will Holmes argues that we should ensure that there is sufficient oversight over certain technological advancements in the justice system to avoid granting these tools God-like authority.

The quest for perfect justice has, at times, seen civilisations appeal to higher powers. At several points in ancient Greek history, oracles were such an authority. Nowadays these oracles are often misunderstood as a form of horoscopic madness. But, in fact, their role was less about predicting the future and more about serving a judicial function. As Catherine Morgan shows, ancient Greek oracles were regulatory mechanisms which enabled community authorities to resolve particularly difficult issues.

These institutions were essentially master arbitrators. Using riddling responses that were open to interpretation, only accepting questions in imprecise formats and being especially sensitive to public opinion, priests were known for being skilled at getting those who consulted them to virtually answer their own question. Accordingly, Euripides stated “the best prophet is the man who’s good at calculating what is probable”. Theatrics also created a powerful and persuasive aura around consultations. In short, behaviour and psychology were the playground of oracular jurisprudence.

Thankfully we live in enlightened times, you may be thinking. But there appear to be certain instances where technology has become tantamount to an ancient Greek oracle. The academic Ian Bogost argues that today we have culturally replaced the authority of God with algorithmic-decision-making (ADM). In his words, “the next time you hear someone talking about algorithms, replace the term with “God” and ask yourself if the meaning changes”.

This tendency is becoming increasingly prevalent in and around legal institutions. A good example of this is the widely-reported study where researchers were able to use Natural Language Processing and Machine Learning to successfully “predict” the outcomes of 584 cases heard at the European Court of Human Rights with 79% accuracy. Miraculous, right?

However, as Frank Pasquale and Glyn Cashwell argue, there is some doubt about how “predictive” the study really was. Like ancient Greek oracular priests, who did the best they could to conjure a mirage of foreknowledge, the researchers used materials the judges had already released with the decision as their input. As Pasquale and Cashwell pithily note, “this is a bit like claiming to “predict” whether a judge had cereal for breakfast yesterday, based on a report of the nutritional composition of the materials on the judge’s plate at the exact time she or he consumed the breakfast”.

Beyond the misnomer of “prediction”, two further features cast doubt on the experiment: the exclusion of cases where the law entirely determined the outcome, and Machine Learning’s inability to comprehend the meaning of words in their specific legal context. Indeed, it could even produce random correlations that introduce unfounded biases. And whilst this is just research for now, the study suggests that this tech could be used not only to predict judgments, but also to help courts select which cases they should hear and to direct judges in their decision making. And when you consider the possibility of this predictive technology being used by litigation funders and law firms, the consequences of any flaws in the system become yet more troubling.

So, why are we turning to technology for justice? For ancient Greek oracles, as Whittaker suggests, their function was to preserve the social fabric of what Durkheim termed the “moral community”. A similar trend can be perceived in 12th century English trials by ordeal. In a society where the law was intertwined with theology, justice could be teased out of the divine arbiter through a variety of different ritualistic tests.

Today, a swathe of research on judicial biases has increased doubts about a human’s propensity for fairness, as Legal Cheek reported earlier this year. As Dr Brian M. Barry explains in How Judges Judge, it can be somewhat “unnerving” to see just how susceptible those on the bench can be to “cognitive biases, prejudices and error”. It is therefore unsurprising that some are touting algorithms in the form of robot judges as the tool to “usher in a new, fairer form of digital justice whereby human emotion, bias and error will become a thing of the past”.

The common thread that links ancient Greek oracles, 12th century trials by ordeal and today’s predictive algorithms is a desire to obtain justice even in the most difficult circumstances. But if history teaches us anything in this regard, it is to proceed with caution.

In the case of ancient Greek oracles and 12th century trials by ordeal, the critics eventually won out, but not without significant long-term societal changes. Indeed, Socrates, perhaps the greatest critic of his time, was lulled into acknowledging the authority of the Delphic oracle, with the sceptical Sophist movement becoming a key catalyst for the decline of oracular jurisprudence.

In England, the likes of the influential medieval theologian Peter the Chanter were keen to highlight divinely-ordained miscarriages of justice. In one case, Peter records how a pilgrim returned from pilgrimage without his fellow traveller, failed his trial by ordeal and was executed for murder. The unfortunate pilgrim had naturally expected omniscient God to know that the crime had never taken place. Much to the horror of all involved, shortly after the execution the pilgrim’s fellow traveller bumbled home safe, sound and very much alive.

Although technology has great potential to improve justice, there must be sufficient oversight and remedy available so that, in striving for justice, we don’t fashion a mirage instead.

Will Holmes is a future magic circle trainee.
